What happens when an AI machine decides you are ‘easygoing’?

When it comes to scoring your personality from 0 to 10, decimal points included, it’s rather hard to feel that any number is accurate or authentic. Take ‘Ability to learn’. Is 10 out of 10 too arrogant? Is 6.7 too low? What about 7.8? Now imagine that it isn’t you scoring yourself, but an AI system scoring you based on your social media profiles, your CV or even just your name, email address or phone number. Welcome to DeepSense, a big data science company that uses AI to predict things. Predict what exactly, you may ask? For example, how likely your potential employees are to maintain stability in the workplace.

I entered DeepSense as an ‘employer looking for a content writer’. I was asked a few questions about the type of position I was looking to fill, and to select the score range I’d like the candidate to hit in categories such as ‘Attitude and Outlook’, ‘Stability Potential’ and ‘General Behaviour’. I was then asked to drop in a link to the candidate’s profile and let DeepSense work its magic. Out of sheer intrigue, I of course added my own LinkedIn profile, to see what my score would be and whether this AI thought I was suited to the job I already do.

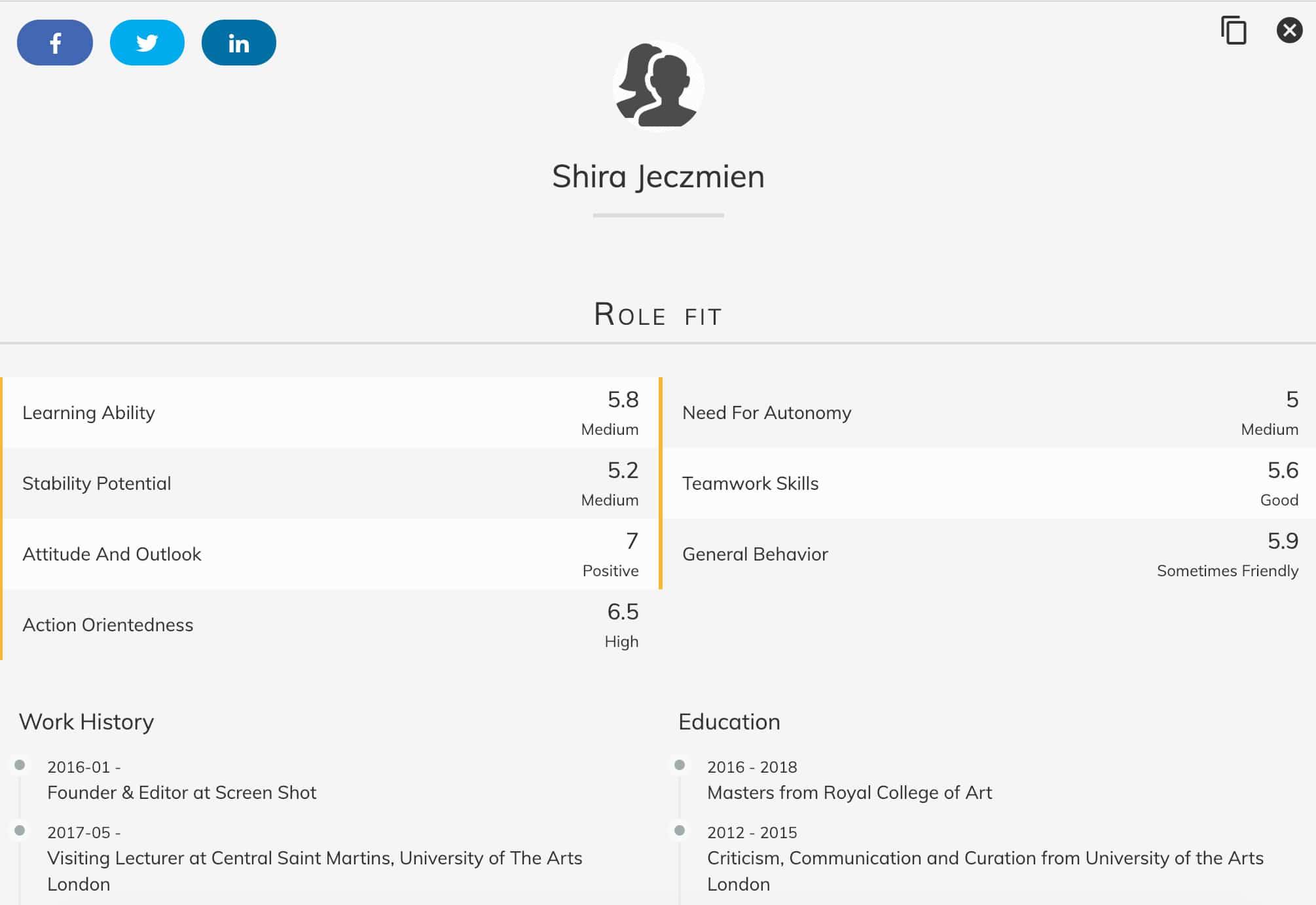

Within seconds, and a few turns of a spinning 3D globe icon, my score was revealed. The results were bleak. DeepSense had reduced my entire career, education and skills to numerical scores that, quite frankly, were worse than I’d imagined: 5.2 for ‘Stability Potential’, 5.8 for ‘Learning Ability’, 5.9 for ‘General Behaviour’ (what does that even mean?) and 5 for ‘Need for Autonomy’. Overall, I was a 59 percent match for the ‘Content Writer’ position. Not ideal.

Sure, I identify as slightly neurotic, with a shorter temper than most, but I never saw these traits as hindrances to my career or to my ability to deliver in a role. Having lived alone since the age of 18, endlessly hustling in the creative industries and wiggling my way through back doors, founding my own company and managing a team of people, I thought my ‘Stability Potential’ score would at least be average. A 7, let’s say. DeepSense determined otherwise.

On closer investigation, I found that DeepSense defines ‘General Behaviour’ as “The overall ability to get along with others despite personal challenges.” For ‘Stability Potential’, the company scores a person’s inclination “to give it their all before calling it quits”, and ‘Need for Autonomy’ measures a person’s tendency “to work better independently”. What’s more, DeepSense summed me up in just a few sentences: “Shira can be friendly at times but does not hesitate from being critical when the situation so demands. Shira can be sceptical of what others have to say and can question them incessantly until Shira gets his/her answers”.

Now, I understand the potential appeal of a tool like this. In theory, it can help recruiters eliminate their own personal biases while scanning through thousands of applications, of which only a small number come from candidates who actually match the role. In an interview with The Verge, DeepSense co-creator Amarpreet Kalkat says that personality is often what determines a good candidate, and that the AI developed by DeepSense is built to determine just that. When asked whether AI is fit to judge a person’s character, Kalkat answers, “From a relative point of view, how accurate is human judgement?”

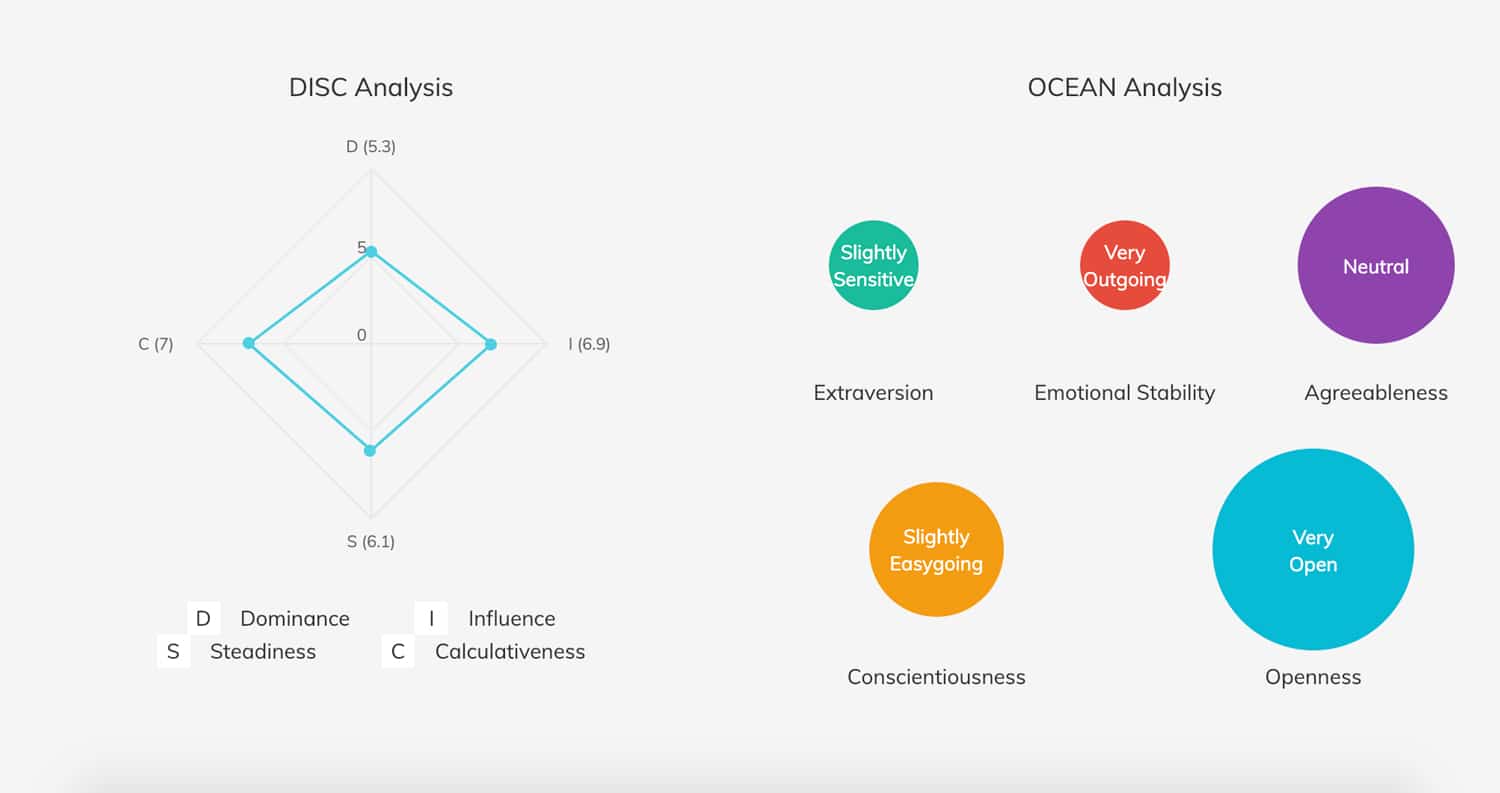

Whether AI systems will enter the recruitment field in a big way is no longer a question of if, or when. They are here, and for the long run. The workforce is growing and jobs are becoming more specialised, so the need to vet applications efficiently is understandable. The real question is whether AI should be used to replace human judgement of character and, more importantly, if it can predict a person’s character as DeepSense claims to, whether it is ethical to use that prediction for or against someone. All I know is that it did not feel good, or accurate, to be reduced to ‘Slightly Easygoing’ and ‘Slightly Sensitive’.