I tried booking one of Bolt’s helicopter rides

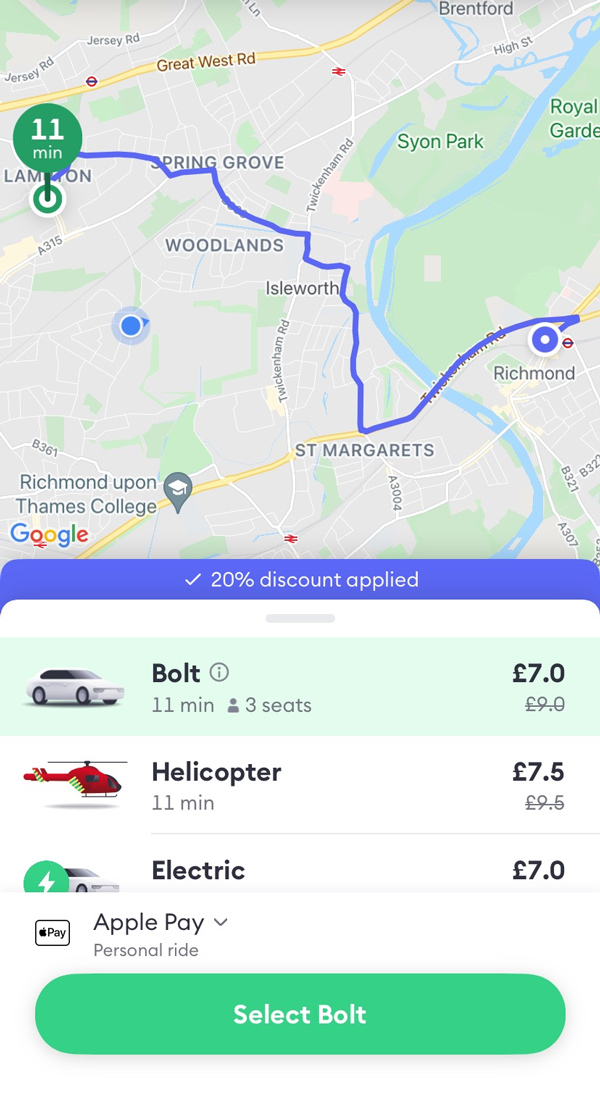

If you think Uber is just taking the mick with its prices and you’ve become an avid Bolt user like me instead, you’ll have probably noticed a little red helicopter pop up as a new ride option. So, what the hell is it? After seeing a TikTok video with nearly 3 million views of a UK user attempting to book a ride, followed by the sound of a helicopter circling over the content creator, I just had to see for myself. At just a few pounds more than a normal car ride, it seemed too good to be true.

Bolt’s new addition had the internet stumped, with one Twitter user writing, “me and my mate just checked taxis we could get to this rave in Brixton and one of the options on Bolt is a helicopter and it’s only £1 more than a car? Hang on, what?” Another wrote, “Can anyone tell me why Bolt is saying I can get a helicopter to East Croydon for £5?” Another simply asked, “Has anyone [even] tried the helicopter on Bolt?” And so, like the gullible fool I am, I attempted to book a helicopter. Fact check, guys: it’s not real, obviously. Consider this a gentle reminder not to believe everything you see on TikTok.

What is this new feature, then? While you can’t catch a helicopter to lunch just yet, the reason behind the new addition is actually a charitable one. The new ride option is part of a collaboration between Bolt and London’s Air Ambulance Charity (LAA) to honour its dedicated and heroic trauma care service for Londoners, which has spanned more than 32 years. The charity relies heavily on public donations to cover its running costs, so Bolt’s initiative, which became available on 23 August 2021, aims to help London’s Air Ambulance raise money through the ride-hailing app.

Writing on the company’s blog, Bolt stated that “London’s Air Ambulance via Bolt is a special ride-type in our app which works just like a normal Bolt ride.” The ride is still in a car: “the only difference is that our rides will include an extra 50p cost which is directly given to London’s Air Ambulance Charity as a donation… Along the way, we’ll [also] be sharing more information about the vital work that London’s Air Ambulance Charity does.”

The special in-app option will run until the end of Air Ambulance Week, which takes place from 6 to 12 September 2021, but it won’t be the last charitable initiative from the ride-hailing company: Bolt has pledged a year of fundraising events. In a first-time partnership for both parties, the Bolt logo will “also appear on the iconic red helicopter, designating Bolt as an official corporate partner.”

CEO of LAA, Jonathan Jenkins, stated, “We are extremely grateful to Bolt for their generous support of the charity, especially following the pandemic. This contribution will help us save more lives in London and will also help to raise awareness of London’s Air Ambulance Charity throughout the capital. Thank you to all of the team at Bolt, we are delighted to be your first London charity partner.”

The partnership is not only a show of appreciation for LAA but also Bolt’s way of saying thank you to Londoners themselves. Bolt’s UK regional manager, Sam Raciti, said, “London has been such a welcoming home to Bolt for two years that our team wanted to say a proper thanks.” He continued, “Like other charities, LAA has faced a difficult 18 months, but the amazing paramedics and doctors have continued to serve London and save lives throughout, and we’re delighted to play our part in helping to keep the red helicopters flying.”

So while you might not get to fly, next time you need a ride, select Bolt’s helicopter option to help somebody else too. You’ll feel better about having taken yet another five-minute ride instead of walking, trust me.